
>1_30

- Draft-P1–P2 – Background

- This week, my experiment starts from a scene in Flatland. (Pause here and let everyone watch the clip.)

- In this scene, the son learns to recognise other shapes by watching how their edges change in length, instead of seeing the whole shape at once.

- In the world of Flatland, characters can’t see surfaces.

- They can only understand objects through edges, light, and shadow.

- Based on this idea, I wanted to see if I could use 3D software to create a viewing condition that feels close to a two-dimensional way of seeing.

- Video available

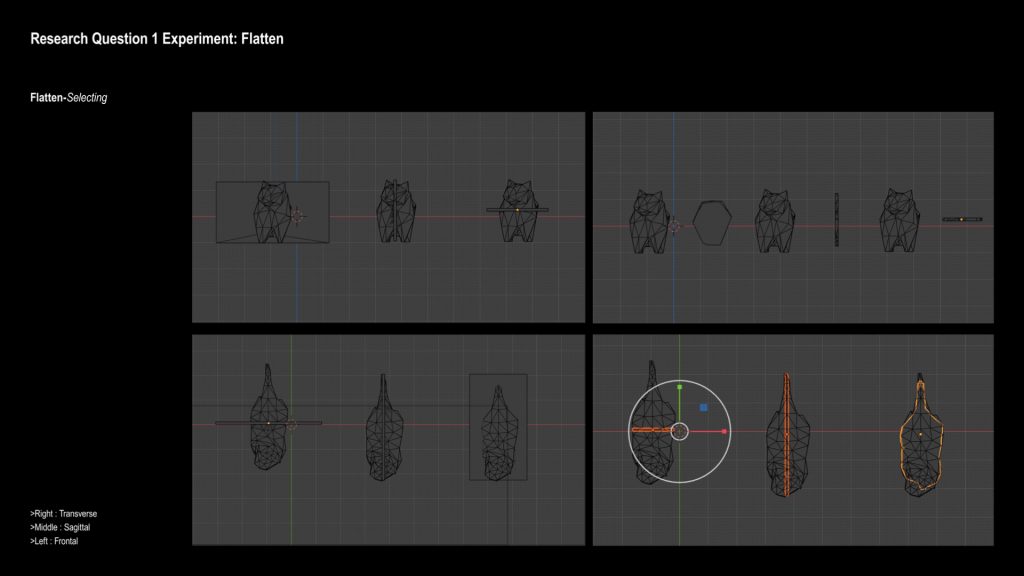

- Draft-P3 – Cutting the Model

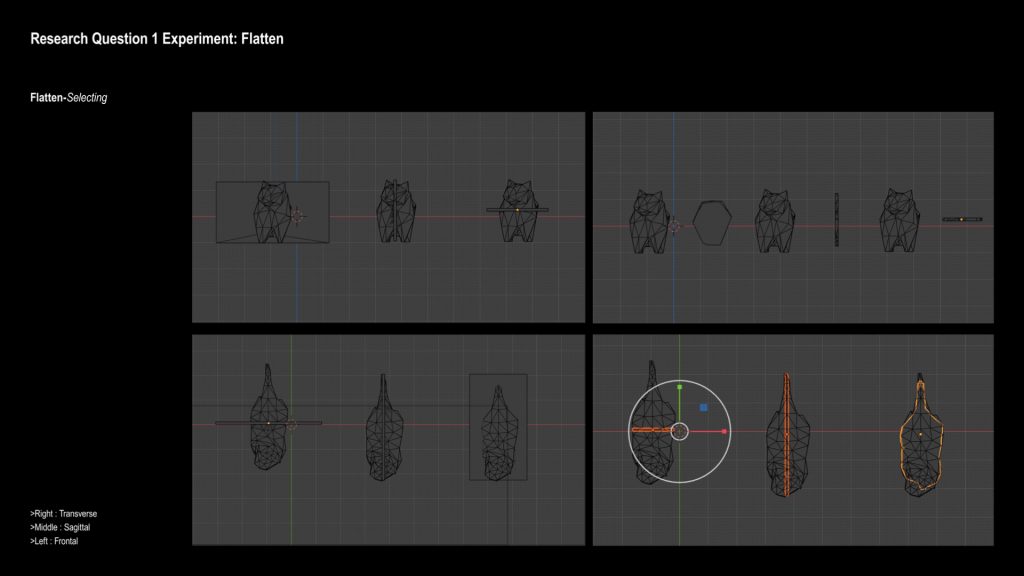

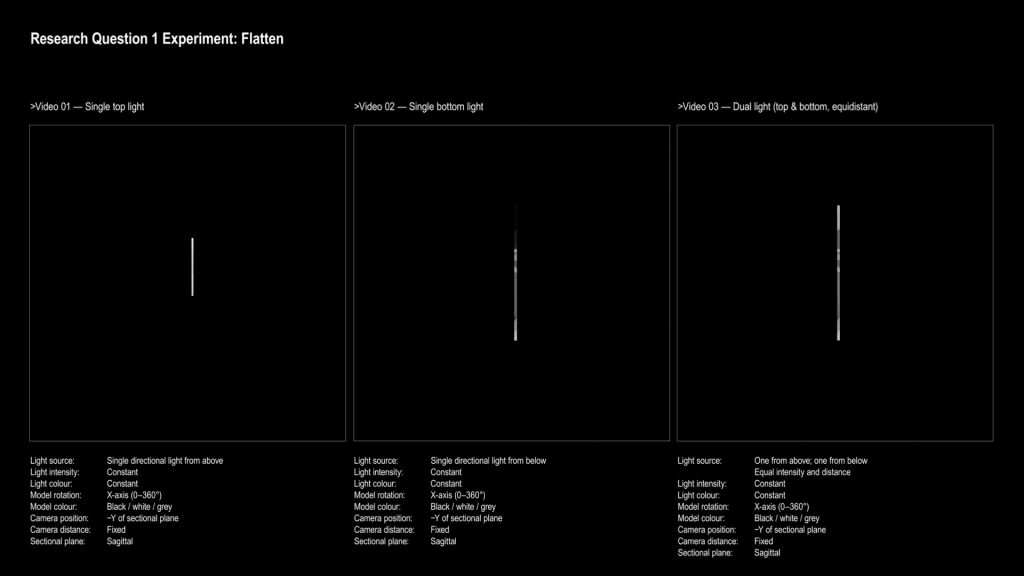

- To do this, I first had to cut the 3D cat model into a single slice.

- I realised that the way I cut the model actually matters a lot.

- It changes how the form is perceived.

- I tested three cutting directions: transverse, frontal, and sagittal.

- I chose the sagittal cut, because visually it felt closest to the viewpoint shown in the film.
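- As a minimal sketch of what the three cuts mean geometrically, the code below intersects a single triangle with a plane. It is not the tool used for the cat model. The axis convention (x = left-right, y = front-back, z = up) and the names `PLANE_NORMALS` and `slice_triangle` are assumptions made only for illustration.

```python
# Hypothetical axis convention (an assumption, not taken from the 3D software):
# x = left-right, y = front-back, z = up.
PLANE_NORMALS = {
    "sagittal": (1.0, 0.0, 0.0),    # separates left from right
    "frontal": (0.0, 1.0, 0.0),     # separates front from back
    "transverse": (0.0, 0.0, 1.0),  # separates top from bottom
}


def signed_distance(point, normal, offset):
    """Distance of a point from the plane normal . p = offset (unit normal)."""
    return sum(p * n for p, n in zip(point, normal)) - offset


def slice_triangle(triangle, normal, offset=0.0):
    """Return the points where the triangle's edges cross the cutting plane."""
    crossings = []
    for i in range(3):
        a, b = triangle[i], triangle[(i + 1) % 3]
        da = signed_distance(a, normal, offset)
        db = signed_distance(b, normal, offset)
        if da * db < 0:  # the two endpoints lie on opposite sides of the plane
            t = da / (da - db)
            crossings.append(tuple(pa + t * (pb - pa) for pa, pb in zip(a, b)))
    return crossings


# One upright triangle standing in the x-z plane, cut three ways.
triangle = [(-1.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 0.0, 2.0)]
for name, normal in PLANE_NORMALS.items():
    print(name, slice_triangle(triangle, normal, offset=0.5))
```

- The same triangle gives a vertical segment, no segment, or a horizontal segment depending on the plane, which is a small-scale version of why the cutting direction changes how the form reads.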

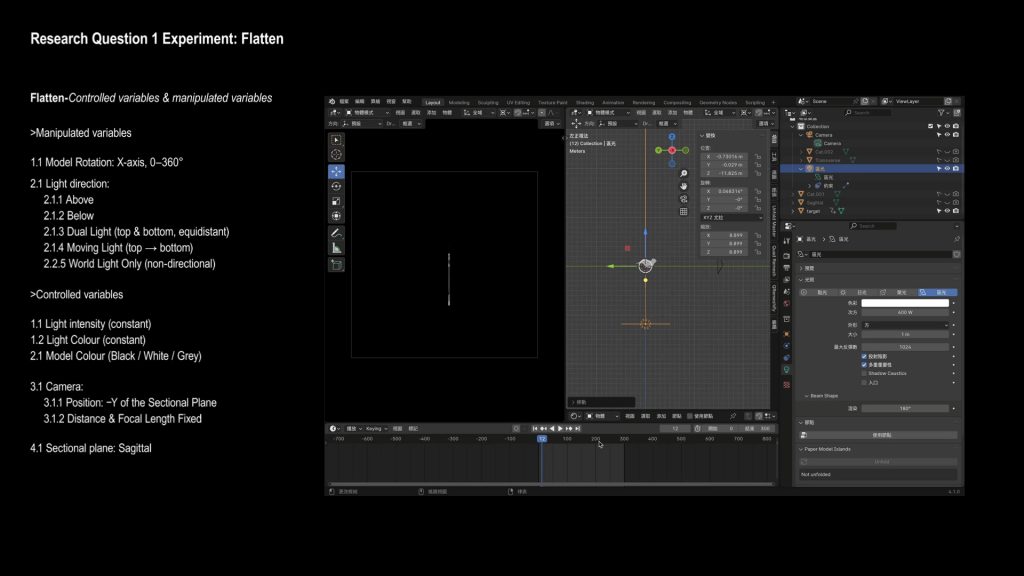

- Draft-P4 – Rotation

- After fixing the cut, I let the model rotate.

- This comes from an idea in the film: if you can’t recognise something from one view, you might understand it by moving around it.

- So I let the model rotate 360 degrees and watched how the line shape changed over time.

- I fixed the camera in a front view and limited everything to black, white, and grey, so colour wouldn’t affect recognition.
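- The rotation idea can be sketched in a few lines, under two simplifying assumptions that are not from the experiment itself: the slice is treated as infinitely thin, and the front camera is orthographic, so depth is simply discarded. The function name `apparent_width` and the sample outline are hypothetical.

```python
import math


def apparent_width(points, angle_deg):
    """Width of a flat outline seen from the front after a turn about the vertical axis.

    points: (x, z) pairs lying in the slice plane; x is horizontal, z is vertical.
    Turning by angle_deg moves x to x * cos(angle); the depth part is not visible
    to an orthographic front camera, so only the scaled x values matter.
    """
    c = math.cos(math.radians(angle_deg))
    xs = [x * c for x, _ in points]
    return max(xs) - min(xs)


# A made-up outline, 4 units wide when seen face-on.
outline = [(-2.0, 0.0), (2.0, 0.0), (1.0, 1.5), (-1.0, 1.5)]
for angle in (0, 30, 60, 90, 180):
    print(angle, round(apparent_width(outline, angle), 3))
```

- Under these assumptions the visible width shrinks with the cosine of the angle and collapses to a line at 90 degrees, so the changing length is the only cue left to read the shape.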

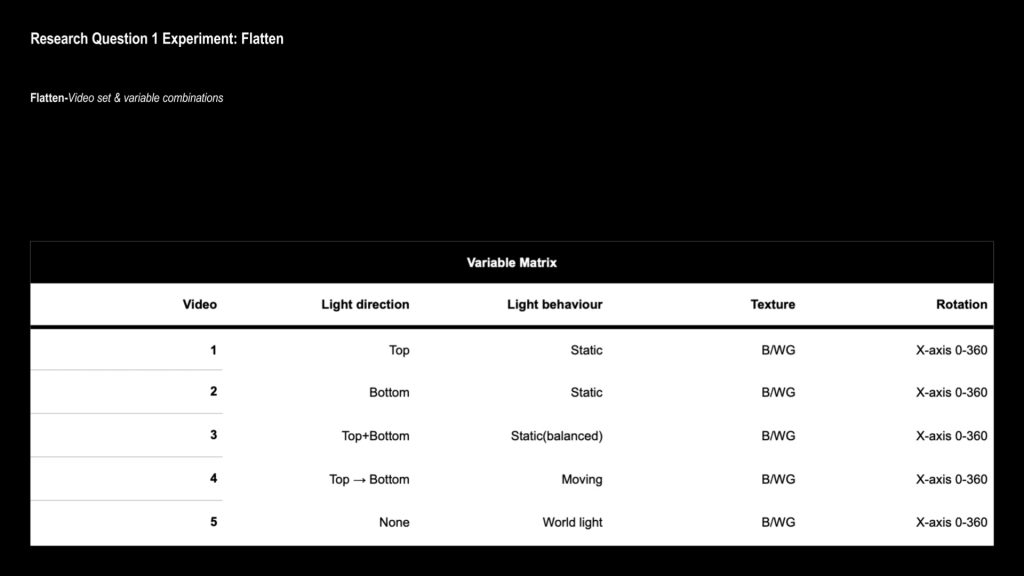

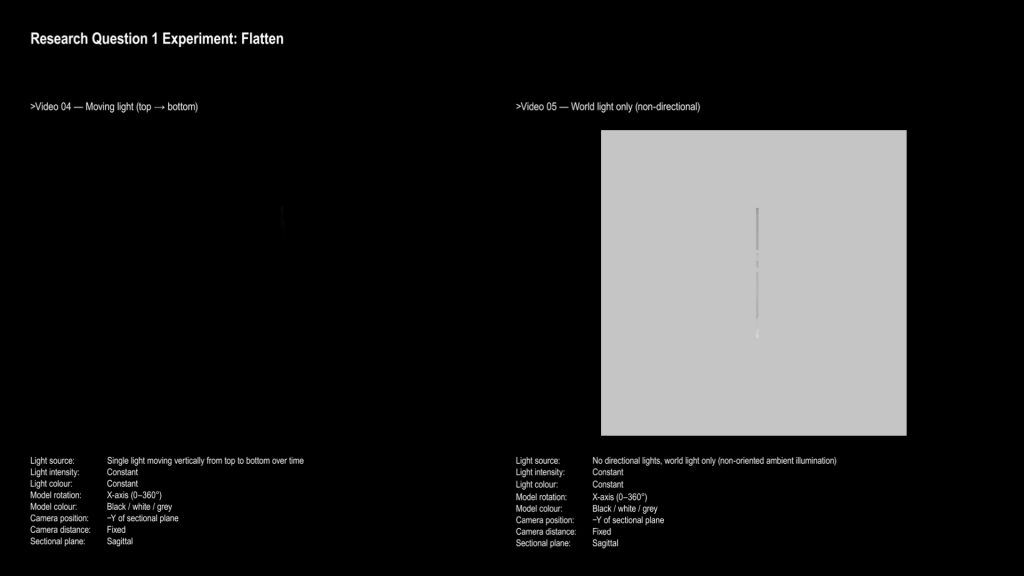

- The two main things I changed were the light direction and the rotation angle.

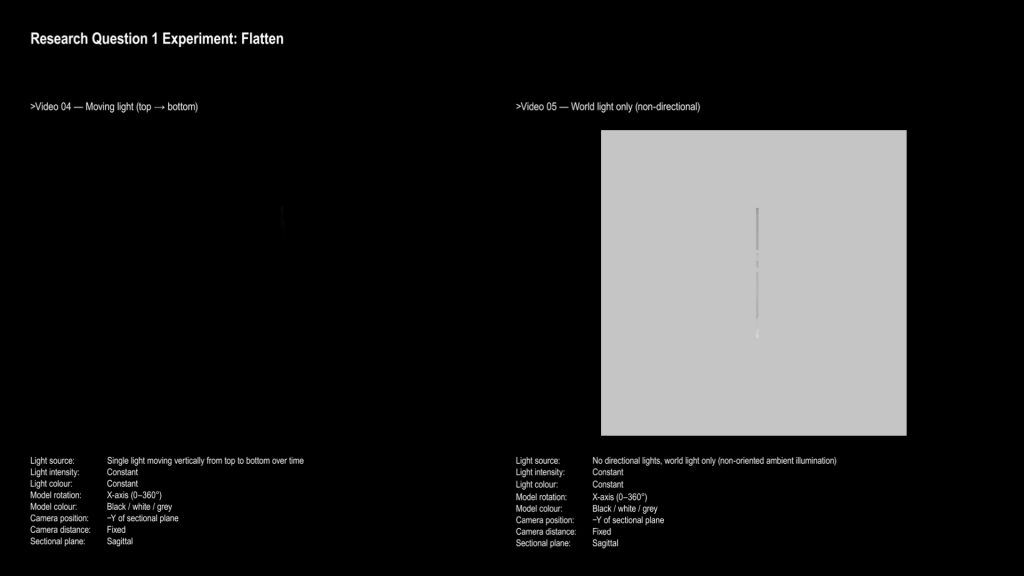

- I also tested a world light, which has no clear direction and lights the object evenly.
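- To make the difference between the two lighting types concrete, here is a rough sketch that assumes simple Lambert shading for the directional light and a constant grey for the world light. Real renderers do more than this, and the function names `directional_grey` and `world_grey` are hypothetical.

```python
import math


def directional_grey(normal, light_dir):
    """Lambert shading: grey value depends on the angle between surface and light.

    normal: direction the surface faces.
    light_dir: direction from the surface towards the light.
    """
    n_len = math.sqrt(sum(c * c for c in normal))
    l_len = math.sqrt(sum(c * c for c in light_dir))
    cos_angle = sum(n * l for n, l in zip(normal, light_dir)) / (n_len * l_len)
    return max(0.0, cos_angle)  # surfaces facing away from the light stay black


def world_grey(normal, strength=0.5):
    """Simplified world light: every surface direction receives the same grey."""
    return strength


light = (0.0, 0.0, 1.0)  # light placed directly above (an illustrative choice)
for normal in [(0.0, 0.0, 1.0), (1.0, 0.0, 0.0), (0.0, 0.0, -1.0)]:
    print(normal, directional_grey(normal, light), world_grey(normal))
```

- In this simplified model the directional light separates surfaces by how they face the light, while the world light gives every surface the same value and so removes shading as a cue.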

- Video available

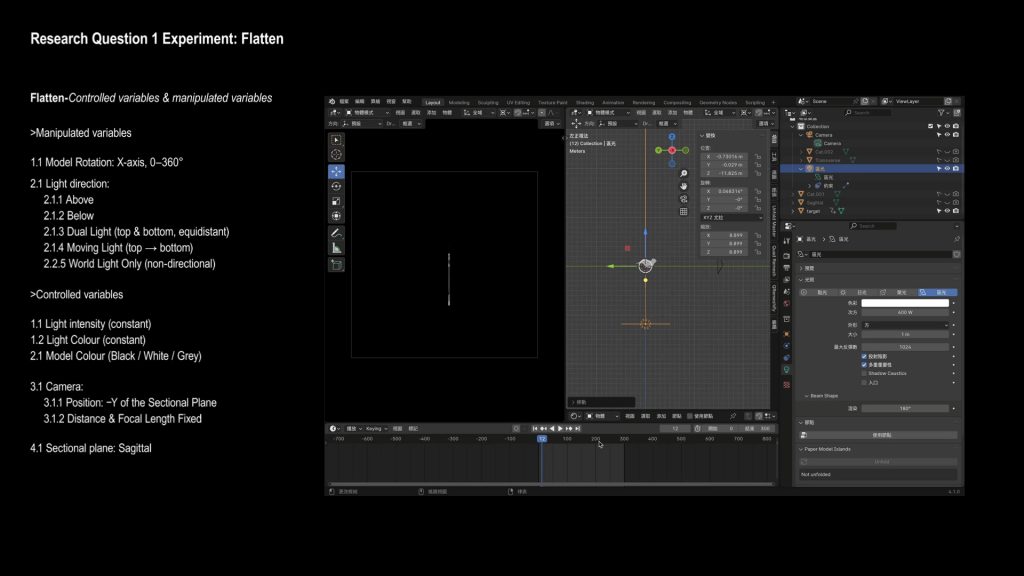

- Draft-P5 – Setup


- Draft-P6–P7 – Result of Experiment 1

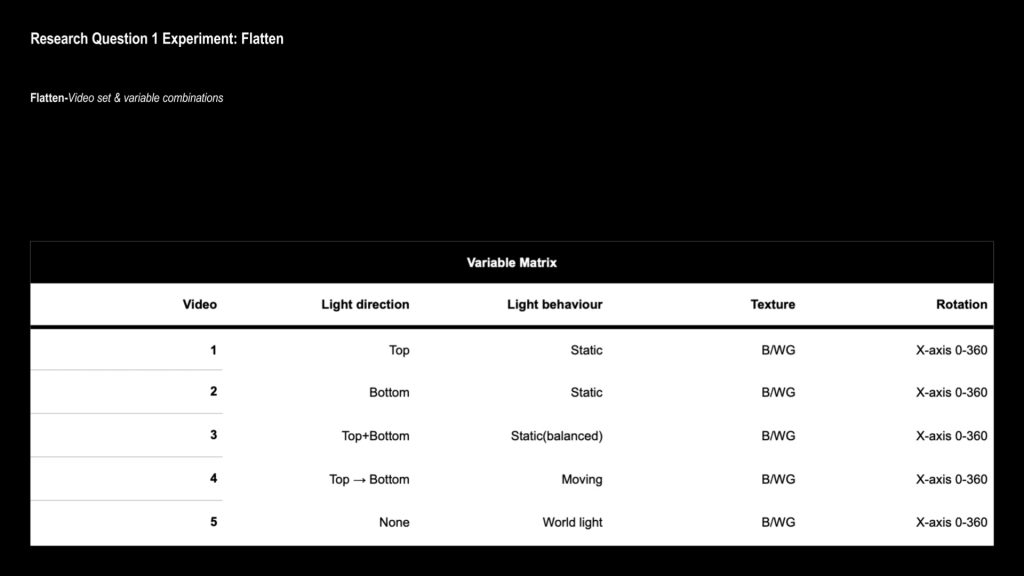

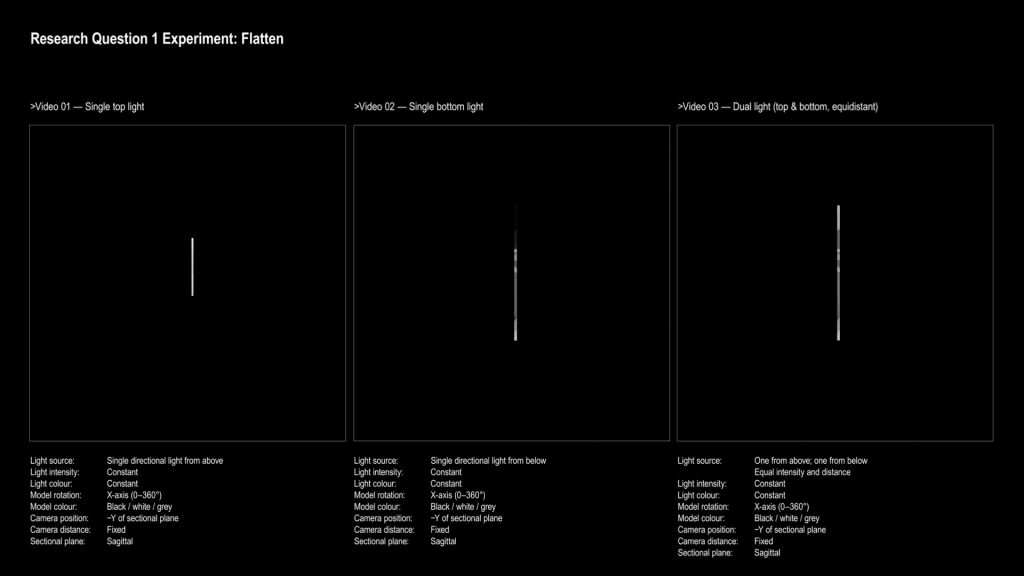

- In the end, I exported five short videos.

- Each one shows how this line-like form changes under different lighting and movement.

- These videos are my response to my first question: how do we recognise objects when visual information is reduced?

- Video available

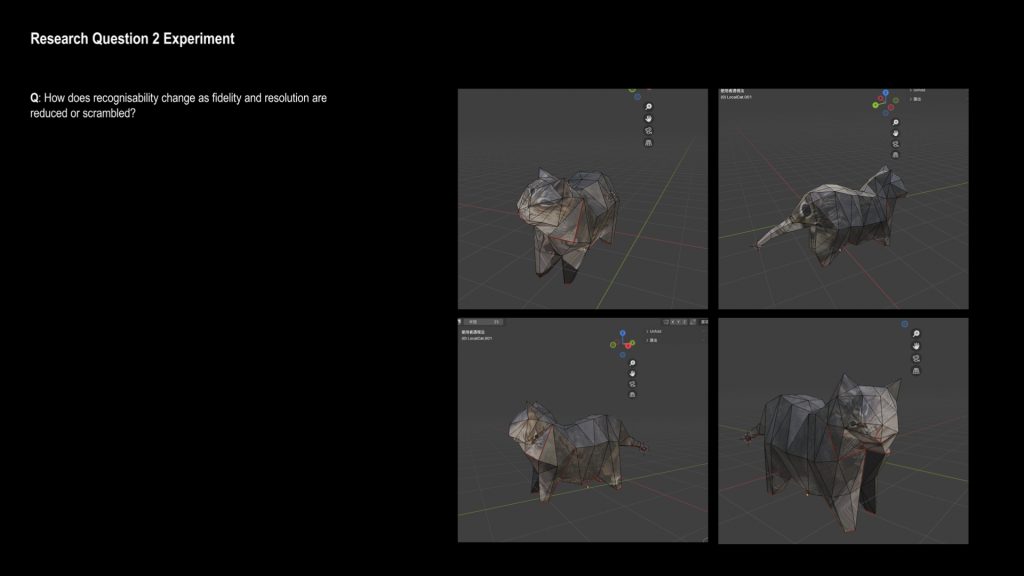

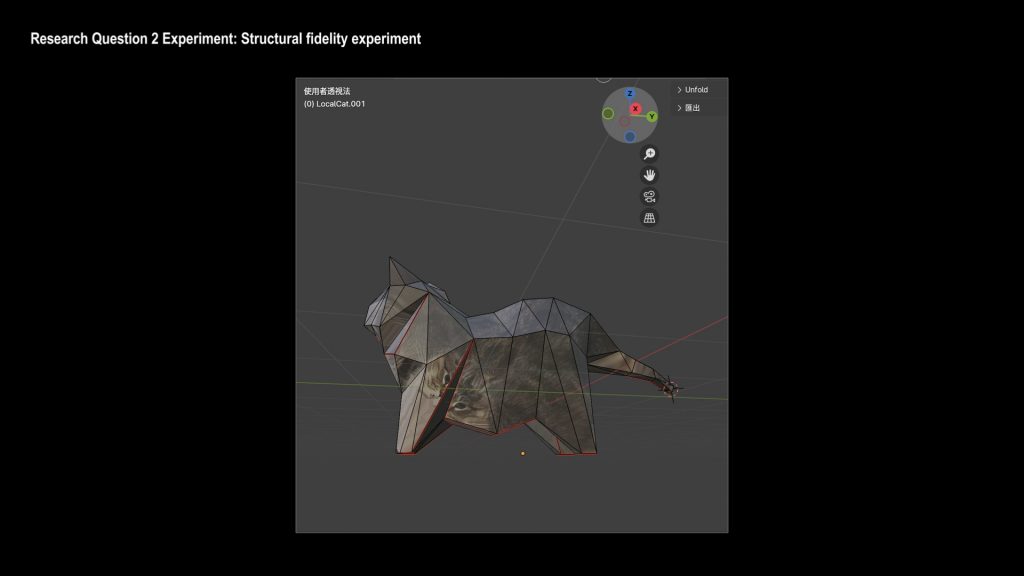

- Draft-P8 – Experiment 2: UV Mapping

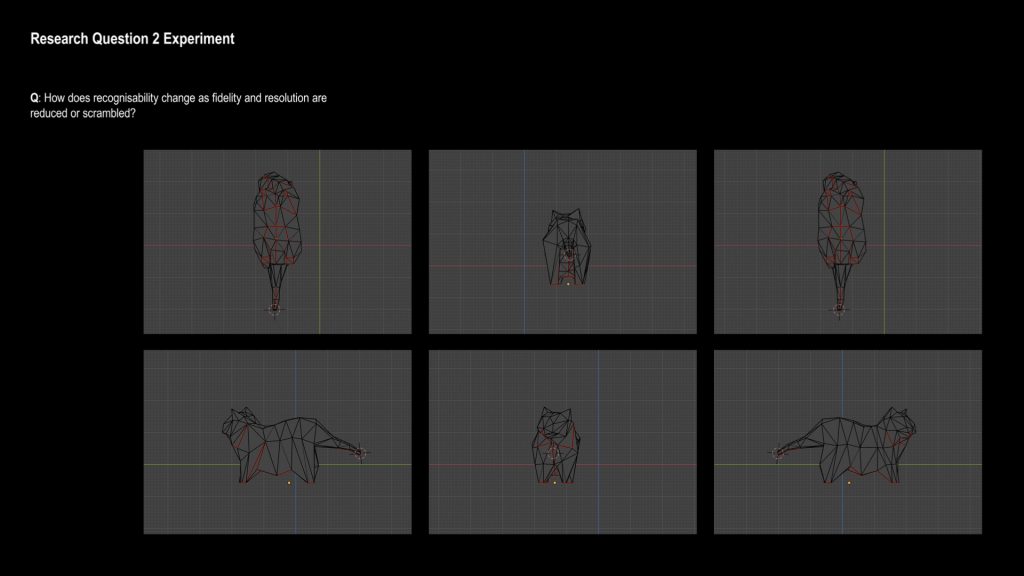

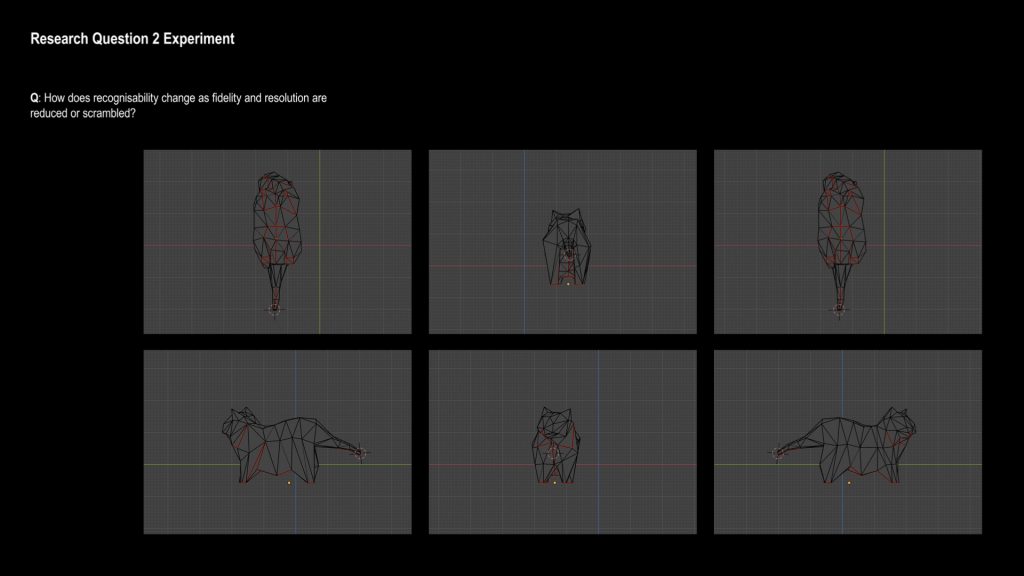

- The second experiment focuses on fidelity, or how much detail we need to recognise something.

- I changed the UV mapping of the same model to see how my understanding of the form would change.

- I tried to unfold the model in a relatively continuous way, using logical seams.
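- A minimal sketch of what a seam does, using a cylinder instead of the actual cat model: the surface is unrolled with one cut at 0 degrees, and two pairs of points are compared in 3D and in UV space. The cylinder, the seam position, and the function names are all assumptions made for illustration.

```python
import math


def cylinder_point(angle_deg, height, radius=1.0):
    """Position of a point on the cylinder's surface in 3D."""
    a = math.radians(angle_deg)
    return (radius * math.cos(a), radius * math.sin(a), height)


def unwrap_uv(angle_deg, height):
    """Unroll the cylinder into a flat UV rectangle, with one seam cut at 0 degrees."""
    return ((angle_deg % 360.0) / 360.0, height)


def compare(angle_a, angle_b, height=0.5):
    """Return (distance on the 3D surface points, distance in UV space)."""
    d3 = math.dist(cylinder_point(angle_a, height), cylinder_point(angle_b, height))
    duv = math.dist(unwrap_uv(angle_a, height), unwrap_uv(angle_b, height))
    return d3, duv


print("pair away from the seam:", compare(100, 120))
print("pair across the seam:   ", compare(350, 10))
```

- Both pairs are equally close on the 3D surface, but the pair that straddles the seam lands on opposite edges of the unfolded map, so seam placement decides which neighbouring features stay together in the texture.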

- Draft-P9 – Face as Focus

- I focused mainly on the face, because it’s one of the most recognisable parts of the model.

- Small changes in the face can quickly change how we read the whole object.

- Draft-P10 – Change in Perception

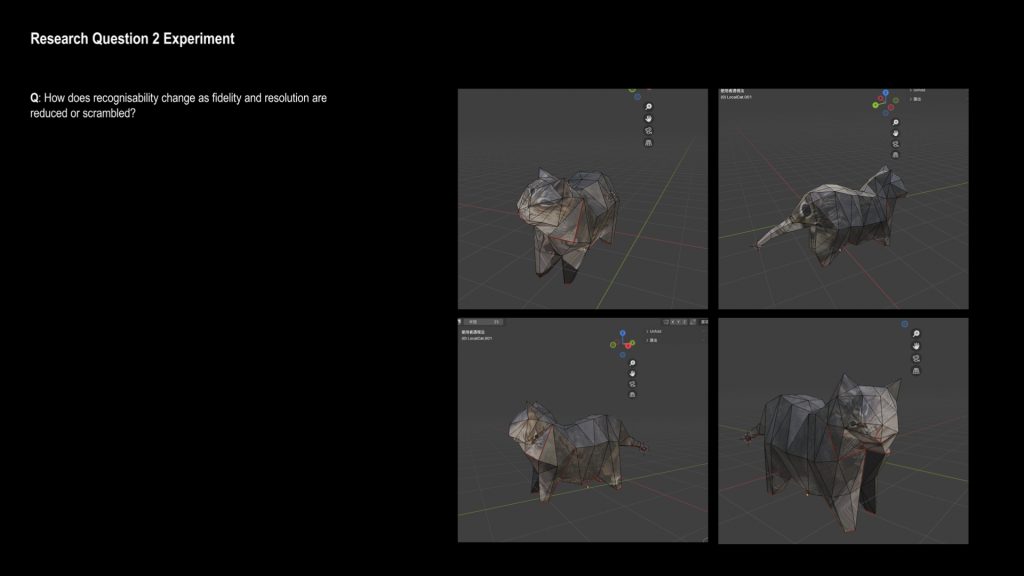

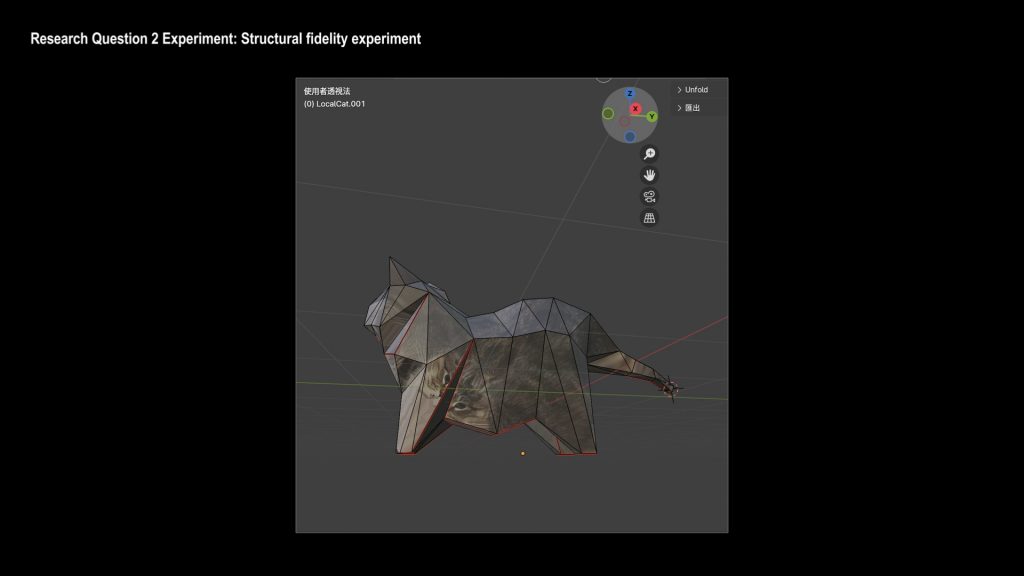

- Here I noticed something interesting.

- (Show the video here.)

- From some angles, the texture looks like a flat, continuous surface.

- But because of the seam placement, the second eye is separated, and there’s an extra face between the two eyes.

- As the angle changes, I stop reading the eyes as a flat image, and I start to understand them as part of a 3D structure.

- At this moment, my perception shifts from seeing a texture to recognising a form.

- This made me think about whether we recognise images first, or structures first.

- Video available
